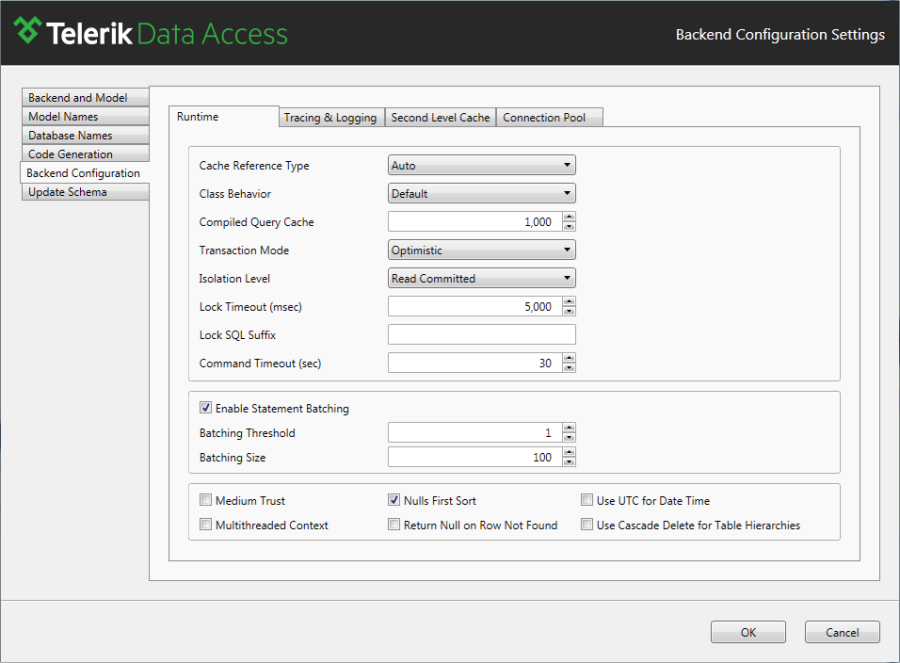

Runtime Configuration

This article is relevant to entity models that utilize the deprecated Visual Studio integration of Telerik Data Access. The current documentation of the Data Access framework is available here.

This topic provides overview of the Runtime Configuration settings.

- Cache Reference Type

- Class Behavior

- Compiled Query Cache

- Transaction Mode

- Isolation Level

- Lock Timeout (msec)

- Lock SQL Suffix

- Command Timeout (sec)

- Enable Statement Batching

- Medium Trust

- Multithreaded Context

- Nulls First Sort

- Return Null on RowNotFound

- Use UTC for DateTime

-

Cache Reference Type - specifies the type of the references maintained by the cache of the OpenAccessContext:

For more information about the Cache Reference Type setting, refer to How to: Configure Garbage Collection.

- AUTO - specifies that references to fetched data are strongly held and references to user instances are weakly held.

- STRONG - specifies that all references can't be reclaimed.

- WEAK - specifies that a reference is maintained using a System.WeakReference allowing the garbage collector to reclaim the object.

-

Class Behavior - defines the class behavior - ReadWrite/ReadOnly/InsertOnly:

- Default or ReadWrite - allows full access to user data. All CRUD (Create, Read, Update and Delete) modifications are allowed.

- Read Only - allows only reading user data. No CUD modifications (Create, Update or Delete) are allowed.

- Insert Only - allows only insert and read operations for objects of this type.

- Compiled Query Cache - controls the maximum number of queries that are kept in the pre-compiled query cache. The pre-compiled query cache is the one that holds already computed translations from LINQ to the corresponding SQL expressions. This property controls the size of the compiled query cache. The default value is 0, which is internally translated to the default size of 1000. Negative numbers will lead to a size of 1000, too. The only reason to increase the value would be that you have more than 1000 different queries for which you need to store the compiled query information.

-

Transaction Mode - controls which objects are locked when pessimistic concurrency control is used. The Commit operation will verify that modified objects have not been updated by another transaction meanwhile.

- Optimistic (default) - concurrency control happens during transaction commit. Transaction conflicts will be detected during Transaction.Commit() and will cause Telerik.OpenAccess.Exceptions.OptimisticVerificationException. Transaction will verify that modified objects have not been updated by another transaction meanwhile. Only available with optimistic transactions.

- Pessimistic Explicit - concurrency control happens during entire transaction. Transaction conflicts will be detected for all reading/modifying methods and will cause Telerik.OpenAccess.Exceptions.LockNotGrantedException to be thrown.

- Pessimistic Locking All - all objects are write-locked when fetched from the data store. Concurrency control happens during entire transaction. Transaction conflicts will be detected for all reading/modifying methods and will cause Telerik.OpenAccess.Exceptions.LockNotGrantedException to be thrown.

- Pessimistic Locking First - first object is write-locked when fetched from the data store. Concurrency control happens during entire transaction. Transaction conflicts will be detected for all reading/modifying methods and will cause Telerik.OpenAccess.Exceptions.LockNotGrantedException to be thrown.

-

Isolation Level - controls the transaction locking and row versioning behavior. The isolation level describes the degree to which the data being updated is visible to other transactions.

The Isolation Level configuration on model level is deprecated. It is recommended to use the ContextOptions.IsolationLevel property of OpenAccessContextBase. You can check the Managing Isolation Level article.

- Unspecified - a different isolation level than the one specified is being used, but the level cannot be determined. If you set the IsolationLevel to Unspecified, the transaction executes according to the isolation level that is determined by the driver that is being used.

- Chaos - the pending changes from more highly isolated transactions cannot be overwritten. Behaves the same way as ReadUncommitted, but checks the isolation level of other pending transactions during a write operation so that transactions with more restrictive isolation levels are not overwritten.

- Read Uncommitted - the Read Uncommitted isolation level allows a task to read data that can be in the middle of being modified by another transaction, uncommitted changes to data in the database. This is also known as dirty read. Values in the data can be changed and rows can appear or disappear in the data set before the end of the transaction.

- Read Committed (Default) - the Read Committed isolation level specifies that a transaction can read only committed changes to data. It cannot read data that has been modified but not committed by other transactions. Data can be changed by other transactions between individual statements within the current transaction. If a transaction needs to read a row that has been modified by an incomplete transaction in another session, the transaction waits until the first transaction completes.

- Repeatable Read - it means that the transaction will lock all the data that is used in the query and another transaction cannot update the data until the current transaction completes. Other transactions can insert new rows that match the search conditions of statements issued by the current transaction. If the current transaction then retries the statement it will retrieve the new rows, which results in phantom reads.

- Serializable - this is the most restrictive isolation level which prevents other user from updating or inserting rows into the data set until the transaction completes. The Serializable isolation level also specifies that statements cannot read uncommitted changes to data in the database.

- Snapshot - in databases, Snapshot isolation specifies that data read by any statement in a transaction will be the transactionally consistent version of the data that existed at the start of the transaction. A transaction executing under snapshot isolation appears to operate on a personal snapshot of the database, taken at the start of the transaction. Data modifications made by other transactions after the start of the current transaction are not visible to statements executing in the current transaction. The transaction itself will successfully commit only if no updates are made to the data by any concurrent updates made since that snapshot.

- Lock Timeout (msec) - when Telerik Data Access cannot grant a lock to a transaction on a resource because another transaction already owns a conflicting lock on that resource, the first transaction becomes blocked waiting on the resources. The Lock Timeout settings allows Telerik Data Access to set a maximum time that a statement waits on a blocked resources.

- Lock SQL Suffix - controls the SQL suffix that is appended for update operations.

-

Command Timeout (sec) - The command timeout determines the wait time before terminating the unfinished Telerik Data Access operations. The Command Timeout setting allows you adjust that time so that long-running database operations are allowed to finish their execution. By default, the value is 30 seconds. Once the timeout is exceeded SQLException will be thrown.

Note that the execution of the long-running operations can be terminated before Command Timeout is reached, due to exceeding LockTimeout and Active Connection Timeout.

-

Enable Statement Batching - enables you to specify if multiple INSERT, UPDATE or DELETE statements with the same structure should be executed as a Single Instruction Multiple Data operation. Enabling Statement Batching will improve performance in cases where the database server is harder to reach, for example, due to a higher latency network. Statement Batching is enabled by default.

Note that INSERT statements for tables with auto-increment columns will not be batched.

Currently, Statement Batching is supported for Microsoft SQL Server, Microsoft Azure, Oracle and Postgres and will not be applied when using other back-ends.

- Batching Threshold - with this setting you can set the maximum number of similar statements which should not be batched. The default value is 1.

- Batching Size - with this setting you can specify the maximum number of statements which a single batch can contain. The default value is 100.

- Medium Trust - if set to True, allows Telerik Data Access to work in a medium trust environment (ASP.NET). Under medium trust, some operations cannot be performed and the Telerik Data Access runtime must be made aware of this. This affects things where privileged operations are needed.

- Multithreaded Context - supports multiple threads accessing one OpenAccessContext, i.e., one OpenAccessContext maybe used from more than one thread at the same time. Enabling the support for concurrent threads will turn on additional synchronization, which can affect the performance.

- Use UTC for DateTime - specifies whether to use Coordinated Universal Time format. The default value is False.

- Nulls First Sort - controls whether the database will use the NULLS FIRST sorting option (when you order by a certain column the position of the records that have null in that column). Not all database servers support this option. (For SQL server it is the default behavior – in oracle nulls are at the end so you have to set it to true to have the same behavior as sql server). The default value is True.

- Return Null on RowNotFound - controls whether null is to be returned when an object references an object that does not exist in the database by foreign key. When false RownNotFoundException is being thrown.

-

Use Cascade Delete for table hierarchies - in cases when a domain model contains classes in vertical inheritance, this setting notifies Telerik Data Access whether to issue additional DELETE statements targeting the derived classes when objects from base classes are deleted.

Changing the state of Use Cascade Delete for table hierarchies requires you to synchronize the domain model and the database with the Update Database from Model wizard. This will recreate the constraints with the appropriate setting for cascade delete. Skipping this process may lead to performance issues and DataStoreException during runtime.

- If it is checked, Telerik Data Access will generate a DELETE statement only for the objects from the tables mapped to the base classes and will rely on the backend to remove the corresponding objects from the related tables. In this state, the setting notifies Telerik Data Access to use cascade delete for all the domain classes that participate in a hierarchy, unless the Use Cascade Delete property for a given domain class is explicitly set to No.

- If it is unchecked Telerik Data Access will attempt to delete explicitly not only records from the base tables but the related ones from the derived tables as well. In this state, Telerik Data Access will use cascade delete only for the domain classes from the hierarchy for which the Use Cascade Delete property is explicitly set to Yes.